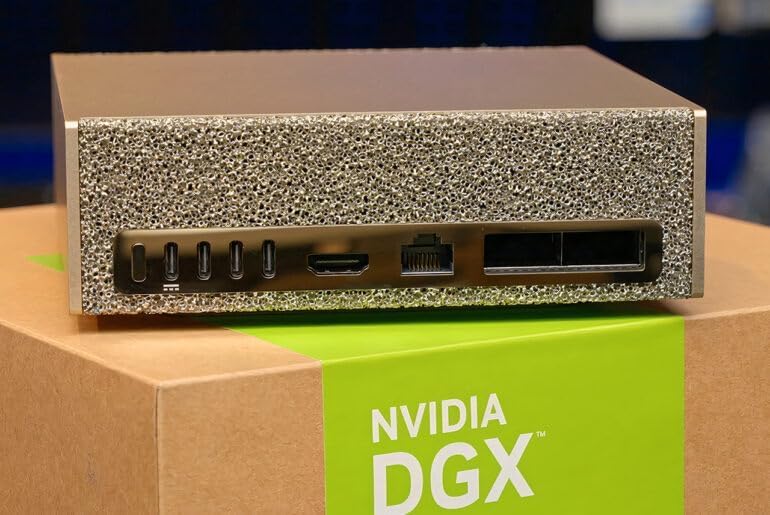

NVIDIA DGX Spark™ Personal AI Desktop Supercomputer – Desktop GB10 Grace Blackwell Chip (2026 Edition Review)

The NVIDIA DGX H100 system marked a major leap in AI computing power, and the NVIDIA DGX Spark™ Personal AI Desktop Supercomputer brings that legacy to a more accessible, desktop-oriented form factor. Built around the GB10 Grace Blackwell chip, this machine is engineered for developers, researchers, and AI engineers who want datacenter-class architecture and software without a full enterprise rack setup.

In 2026, AI workloads are more demanding than ever: large language model training, generative AI pipelines, simulation systems, and real-time inference all require high compute density. The DGX Spark aims to bridge the gap between enterprise DGX systems and personal high-performance workstations. It combines NVIDIA’s latest GPU innovations with Grace CPU integration, a unified memory architecture, and optimized AI software stacks.

Unlike traditional workstations, this system is not just about raw GPU power. It is designed as an end-to-end AI development platform, enabling seamless model training, fine-tuning, and deployment workflows. Whether you’re building transformer models, computer vision pipelines, or reinforcement learning environments, this desktop supercomputer delivers enterprise-grade capabilities in a compact environment.

Next-Gen AI Architecture & Key Features

The NVIDIA DGX Spark™ is packed with advanced technologies that make it a standout in the AI workstation category. At its core, the GB10 Grace Blackwell chip merges CPU and GPU capabilities into a unified architecture designed for extreme data throughput and energy efficiency.

One of its most significant advantages is the unified memory architecture, which lets the CPU and GPU access the same memory pool. This removes a bottleneck common in traditional systems, where data must be copied back and forth between separate CPU and GPU memory domains.
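To make the shared-pool idea concrete, here is a minimal sketch of the arithmetic for checking whether a model's weights fit in a single memory pool. The bytes-per-parameter table, the 20% overhead factor, and the 16 GiB pool in the example are illustrative assumptions, not DGX Spark specifications; substitute the figures for your own system and model.

```python
# Rough weight-memory estimate: parameter count x bytes per parameter.
# Every number used here is an illustrative assumption, not a measured spec.

BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_gib(num_params: float, dtype: str) -> float:
    """Memory needed for the weights alone, in GiB."""
    return num_params * BYTES_PER_PARAM[dtype] / 2**30


def fits(num_params: float, dtype: str, pool_gib: float,
         overhead: float = 1.2) -> bool:
    """True if the weights, scaled by a hypothetical overhead factor
    (activations, caches, runtime), fit in the shared memory pool."""
    return weight_gib(num_params, dtype) * overhead <= pool_gib


if __name__ == "__main__":
    for dtype in BYTES_PER_PARAM:
        size = weight_gib(7e9, dtype)
        verdict = "fits" if fits(7e9, dtype, pool_gib=16) else "does not fit"
        print(f"7B params @ {dtype}: {size:.1f} GiB, {verdict} in 16 GiB")
```

On a system with separate CPU and GPU memory, the same check has to be made against the GPU's memory alone, which is why a single shared pool changes what can be loaded.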

Key features include:

- Grace CPU + Blackwell GPU hybrid architecture

- High-bandwidth unified memory system optimized for AI workloads

- Tensor core acceleration for deep learning models

- Native support for large-scale transformer inference

- Optimized NVIDIA AI Enterprise software stack

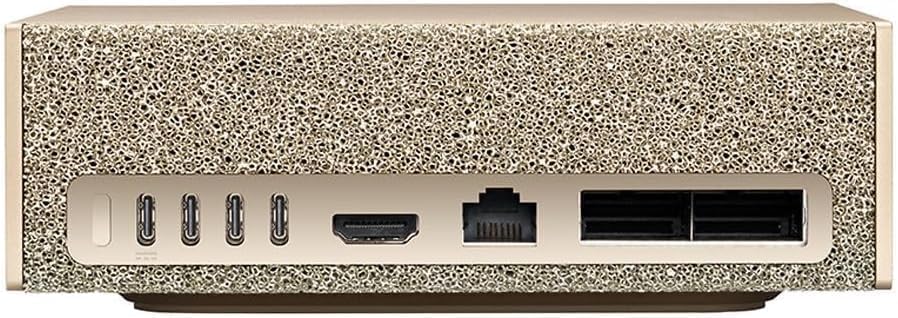

- Compact desktop design with datacenter-level performance

These features make the system well suited to researchers working on generative AI workloads such as text generation, image synthesis, and multimodal systems.

AI Architecture, Memory System & Engineering Design

The engineering behind the DGX Spark is focused on eliminating traditional AI compute limitations. Unlike conventional GPU workstations, this system integrates CPU and GPU cores in a tightly coupled architecture. This reduces latency and improves throughput when handling large datasets or training deep neural networks.

The GB10 architecture also emphasizes power efficiency, allowing sustained high-performance workloads without excessive thermal throttling. Advanced cooling systems ensure stable performance even under continuous AI training sessions.

Another highlight is its optimized interconnect fabric, which provides fast data transfer between compute units. This matters most for workloads that are split across compute units and must continually exchange tensors such as activations and gradients to stay synchronized.

For developers working on large language models (LLMs), this architecture can reduce training time compared to conventional consumer-grade GPUs. Fine-tuning transformer models becomes more efficient because of the improved memory bandwidth and the reduced overhead of moving data between CPU and GPU.
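The role of memory bandwidth can be illustrated with a back-of-the-envelope calculation: the time needed to read every weight once sets a floor on any step that touches the whole model. The sketch below assumes a purely bandwidth-bound workload and uses hypothetical numbers (a 14 GB model and 200 GB/s); neither is a DGX Spark measurement.

```python
# Bandwidth-bound estimate: how long does one full pass over the weights take?
# This ignores compute time and caching, so it is a floor, not a prediction.


def stream_seconds(model_bytes: float, bandwidth_gb_s: float) -> float:
    """Seconds to read all weights once at the given bandwidth (decimal GB/s)."""
    if bandwidth_gb_s <= 0:
        raise ValueError("bandwidth must be positive")
    return model_bytes / (bandwidth_gb_s * 1e9)


def passes_per_second_ceiling(model_bytes: float, bandwidth_gb_s: float) -> float:
    """Upper bound on full weight passes per second. For single-stream
    autoregressive decoding this is also a rough ceiling on tokens per second."""
    return 1.0 / stream_seconds(model_bytes, bandwidth_gb_s)


if __name__ == "__main__":
    model_bytes = 14e9      # hypothetical: 7B parameters at 2 bytes each
    bandwidth = 200.0       # hypothetical bandwidth in GB/s
    print(f"one pass: {stream_seconds(model_bytes, bandwidth):.3f} s")
    print(f"ceiling:  {passes_per_second_ceiling(model_bytes, bandwidth):.1f} passes/s")
```

Doubling the bandwidth or halving the bytes per weight doubles the ceiling, which is why both quantization and memory bandwidth show up so prominently in LLM performance discussions.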

Performance in Real-World AI Workloads

When tested in real-world scenarios, the NVIDIA DGX Spark demonstrates exceptional performance across a variety of AI workloads. From training vision transformers to running inference on multimodal models, it consistently delivers high throughput and low latency.

One of the key strengths is its ability to handle parallel workloads efficiently. Developers working with multiple datasets can train models simultaneously without experiencing major slowdowns. This makes it particularly useful in research environments and AI startups where iteration speed is critical.

Compared to traditional workstation GPUs, the DGX Spark can reduce training times significantly, especially for large transformer models. Tasks that previously took days on consumer hardware may be completed in hours, depending on model complexity and dataset size.

Additionally, inference performance is highly optimized, making it suitable for deploying AI applications such as chatbots, recommendation systems, and real-time image processing tools.

Pros & Cons Analysis

| Pros | Cons |
|---|---|
| Extreme AI computing power in a desktop form factor | Very high cost compared to consumer GPUs |
| Unified CPU-GPU architecture reduces bottlenecks | Requires advanced knowledge for optimal usage |
| Optimized for large AI models and LLM training | Overkill for general computing tasks |
| Enterprise-grade NVIDIA software ecosystem | Limited availability in some regions |
| Excellent thermal and power efficiency for its class | High power consumption under full load |

Use Cases, Workflow Integration & Productivity Boost

The DGX Spark is not just a machine; it is a complete AI development ecosystem. It integrates seamlessly with NVIDIA’s AI Enterprise suite, CUDA ecosystem, and popular frameworks such as TensorFlow and PyTorch.
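As a first step when wiring such a machine into a framework-based workflow, it helps to confirm that the framework actually sees the GPU. The following is a minimal sketch using PyTorch's `torch.cuda.is_available()`; the helper name `pick_device` is hypothetical, and the fallback to `"cpu"` when PyTorch is not installed is a convenience assumption of this example.

```python
import importlib.util


def pick_device() -> str:
    """Return "cuda" if PyTorch is installed and reports a CUDA device,
    otherwise fall back to "cpu"."""
    if importlib.util.find_spec("torch") is None:
        return "cpu"  # PyTorch is not installed, so there is nothing to query
    import torch
    return "cuda" if torch.cuda.is_available() else "cpu"


if __name__ == "__main__":
    print(f"Selected device: {pick_device()}")
```

The returned string can be passed directly to `torch.device(...)` or to a tensor's `.to(...)` call in a training script.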

Researchers can use it for training large language models, fine-tuning generative AI systems, or running complex simulations in physics, finance, and bioinformatics. Its architecture is particularly beneficial for iterative development cycles where speed and efficiency are crucial.

For startups and enterprise developers, this system can significantly reduce reliance on cloud computing resources, offering long-term cost efficiency and faster experimentation cycles.
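Whether local hardware pays off against cloud GPUs comes down to simple break-even arithmetic. The sketch below is one way to frame it; the purchase price, cloud rate, and local running cost in the example are placeholders chosen for illustration, not quoted prices.

```python
# Break-even estimate: hours of use after which buying beats renting.
# All prices below are hypothetical placeholders.


def break_even_hours(hardware_cost: float,
                     cloud_rate_per_hour: float,
                     local_cost_per_hour: float = 0.0) -> float:
    """Hours of use at which cumulative cloud spend equals the hardware cost
    plus local running costs (power, maintenance)."""
    saving_per_hour = cloud_rate_per_hour - local_cost_per_hour
    if saving_per_hour <= 0:
        raise ValueError("no break-even: cloud is not more expensive per hour")
    return hardware_cost / saving_per_hour


if __name__ == "__main__":
    hours = break_even_hours(hardware_cost=4000.0,
                             cloud_rate_per_hour=2.0,
                             local_cost_per_hour=0.05)
    print(f"Break-even after about {hours:.0f} hours of use")
```

A team that trains only occasionally may never reach the break-even point, while one that runs experiments daily reaches it much sooner, so the utilization estimate matters more than the hourly rate.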

You can also explore creative AI workflows, including image generation pipelines, video synthesis models, and multimodal AI systems that combine text, vision, and audio inputs.

For creators working in digital media, footage from 4K 60fps mirrorless vlog camera systems can feed AI-powered video editing pipelines, enabling faster content production and smarter post-processing workflows.

Frequently Asked Questions (FAQ)

Q1: Is the DGX Spark suitable for beginners in AI?

It is primarily designed for professionals and researchers, but advanced learners can use it with proper knowledge of AI frameworks.

Q2: Can it replace cloud-based AI training?

In many cases, yes. It can significantly reduce dependence on cloud GPUs for development and testing workloads.

Q3: What frameworks are supported?

It supports major AI frameworks such as PyTorch, TensorFlow, and JAX, all of which run on NVIDIA’s CUDA ecosystem.

Q4: Is it good for gaming?

While it can handle graphics workloads, it is not designed as a gaming-focused system.

Q5: How scalable is the system?

It is designed for scalable AI workflows and can integrate into larger NVIDIA DGX infrastructure if needed.

Q6: What makes it different from traditional GPUs?

Its unified architecture, AI optimization, and enterprise-grade ecosystem make it far more powerful for AI-specific workloads.

Final Verdict

The NVIDIA DGX Spark™ stands as one of the most advanced personal AI computing systems available in 2026. It delivers enterprise-level performance in a compact desktop form, making it ideal for AI researchers, developers, and innovation-driven teams. While it is not intended for casual users, its capabilities redefine what is possible in personal AI computing.

If your work involves deep learning, generative AI, or large-scale model training, this system provides a future-proof investment that dramatically enhances productivity and experimentation speed.